Many of us who do calculus using computers know the alternatives we have for differentiation.

The usual approach is to go for one of these:

- Numerical

- Symbolic

- Automatic differentiation

Since numerical methods (finite differences) are what most people think of first when computers and differentiation come up, we rarely try anything else. But forget symbolic, forget finite differences (and don't even think about automatic): we have a course on Power System Analysis, which is fairly computational in nature, and there we use a more traditional method.

1. Hand calculus

The problem is to solve the non-linear load-flow equations, which give the voltages and power factors for a power transmission system. The idea is to use Newton's method and iterate towards the solution. Guess what: we derive the Jacobian by hand and then program the resulting expressions. This is perfectly valid, but it screws up the whole idea of using computers.
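For reference, here is the iteration being described, written in generic notation. The symbols are my own shorthand and not taken from the course: $x$ collects the unknowns, $F(x) = 0$ stands for the load-flow equations, and $J$ is the Jacobian of $F$.

$$
J(x_k)\,\Delta x_k = -F(x_k), \qquad x_{k+1} = x_k + \Delta x_k, \qquad J_{ij}(x) = \frac{\partial F_i}{\partial x_j}(x)
$$

Every entry $J_{ij}$ is one of the partial derivatives we end up working out by hand.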

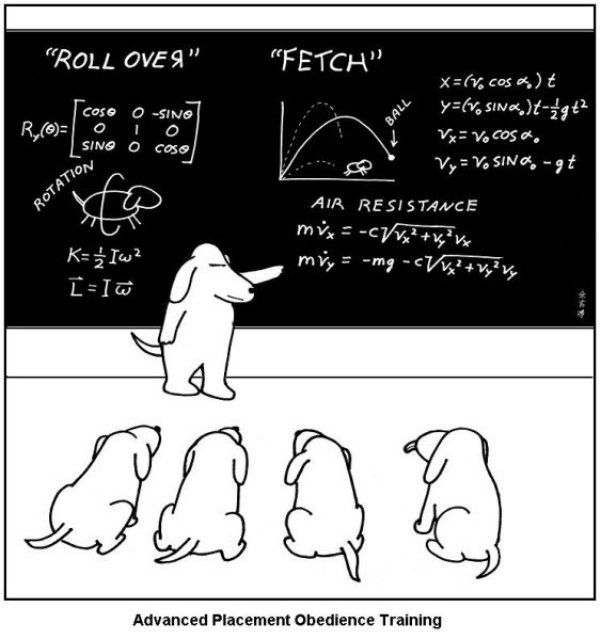

The situation is exactly as in the image above.

You are a dog, and your brain is optimized to judge movement, yet you still do the calculations just because that is the analytical way. This is the perfect "what the hell!" moment. This is how you row a steamer.

It's not about this particular subject. Almost everywhere, our course syllabuses are optimized for the least efficient use of computers (if they are used at all). Obviously, you can switch over to your weapon of choice, but a lot depends on what the barracks relies on.

Consider AD (automatic differentiation). You won't find a cooler method for gradient computation. If you use Python, there is already an excellent library, `ad`. And most people in the machine learning community (extensive users of gradient computation) already favor AD (see Theano, Kayak, etc.) over the more error-prone symbolic and finite-difference methods.
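To make this less abstract, here is a minimal sketch of forward-mode AD using dual numbers, in plain Python with no libraries. It does not use the `ad` package or its API. The `Dual` class, the helper names, and the toy two-equation system (loosely shaped like a two-bus load-flow problem, with made-up values for `B`, `P_LOAD` and `Q_LOAD`) are all my own assumptions for illustration. The point is only that the Jacobian for a Newton step can come out of the same code that evaluates the equations, with no hand-derived expressions.

```python
import math


class Dual:
    """A number val + dot*eps with eps**2 == 0; `dot` carries the derivative."""

    def __init__(self, val, dot=0.0):
        self.val = val
        self.dot = dot

    @staticmethod
    def lift(x):
        # Wrap plain numbers as constants (derivative 0).
        return x if isinstance(x, Dual) else Dual(float(x))

    def __add__(self, other):
        other = Dual.lift(other)
        return Dual(self.val + other.val, self.dot + other.dot)

    __radd__ = __add__

    def __neg__(self):
        return Dual(-self.val, -self.dot)

    def __sub__(self, other):
        return self + (-Dual.lift(other))

    def __rsub__(self, other):
        return Dual.lift(other) + (-self)

    def __mul__(self, other):
        other = Dual.lift(other)
        # Product rule.
        return Dual(self.val * other.val,
                    self.dot * other.val + self.val * other.dot)

    __rmul__ = __mul__


def sin(x):
    x = Dual.lift(x)
    return Dual(math.sin(x.val), math.cos(x.val) * x.dot)


def cos(x):
    x = Dual.lift(x)
    return Dual(math.cos(x.val), -math.sin(x.val) * x.dot)


def jacobian(f, x):
    """Jacobian of f at x: one forward pass per input variable."""
    n = len(x)
    cols = []
    for j in range(n):
        seeded = [Dual(x[i], 1.0 if i == j else 0.0) for i in range(n)]
        cols.append([Dual.lift(out).dot for out in f(seeded)])
    # cols[j][i] holds d f_i / d x_j, so transpose into rows.
    return [[cols[j][i] for j in range(n)] for i in range(len(cols[0]))]


# Hypothetical toy data, not from any real network.
B, P_LOAD, Q_LOAD = 10.0, 0.5, 0.2


def residual(x):
    """Toy mismatch equations in the unknowns (theta, v)."""
    theta, v = x
    return [B * v * sin(theta) + P_LOAD,
            B * v * v - B * v * cos(theta) + Q_LOAD]


def solve2(A, b):
    """Solve a 2x2 linear system with Cramer's rule."""
    det = A[0][0] * A[1][1] - A[0][1] * A[1][0]
    return [(b[0] * A[1][1] - A[0][1] * b[1]) / det,
            (A[0][0] * b[1] - b[0] * A[1][0]) / det]


def newton(f, x0, tol=1e-10, max_iter=20):
    """Newton's method with the Jacobian supplied by forward-mode AD."""
    x = list(x0)
    for _ in range(max_iter):
        fx = [Dual.lift(v).val for v in f(x)]
        if max(abs(v) for v in fx) < tol:
            break
        dx = solve2(jacobian(f, x), [-v for v in fx])
        x = [xi + di for xi, di in zip(x, dx)]
    return x


solution = newton(residual, [0.0, 1.0])
print("theta, v =", solution)
```

Notice that `residual` is written once, as ordinary arithmetic. Change the equations and the Jacobian follows automatically, which is exactly the bookkeeping we currently do with pen and paper. A real load-flow solver would of course need a general linear solver instead of `solve2` and more operations on `Dual`.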

I don't see the same spirit of making powerful use of computers in our academia. The arguments people usually give are:

You need to learn the Mathematics first.

Ah, so you are already assuming that teaching Calculus in Mathematics was a waste of time.

You will learn nothing if you use functions directly.

C'mon, you were going to teach us finite difference stuff, weren't you? Or wait, you did teach that, but that was erratic pen-and-paper stuff, right? Computers are not vanity stuff. They are not disconnected from Science. Hate it or love it, Computation is not a projection of Mathematics onto Computers; it is Mathematics.